Create your own magic with Web 7.0 DIDLibOS™ / TDW AgenticOS™. Imagine the possibilities.

Copyright © 2026 Michael Herman (Bindloss, Alberta, Canada) – Creative Commons Attribution-ShareAlike 4.0 International Public License

Web 7.0™, Web 7.0 DIDLibOS™, TDW AgenticOS™, TDW™, Trusted Digital Web™ and Hyperonomy™ are trademarks of the Web 7.0 Foundation. All Rights Reserved.

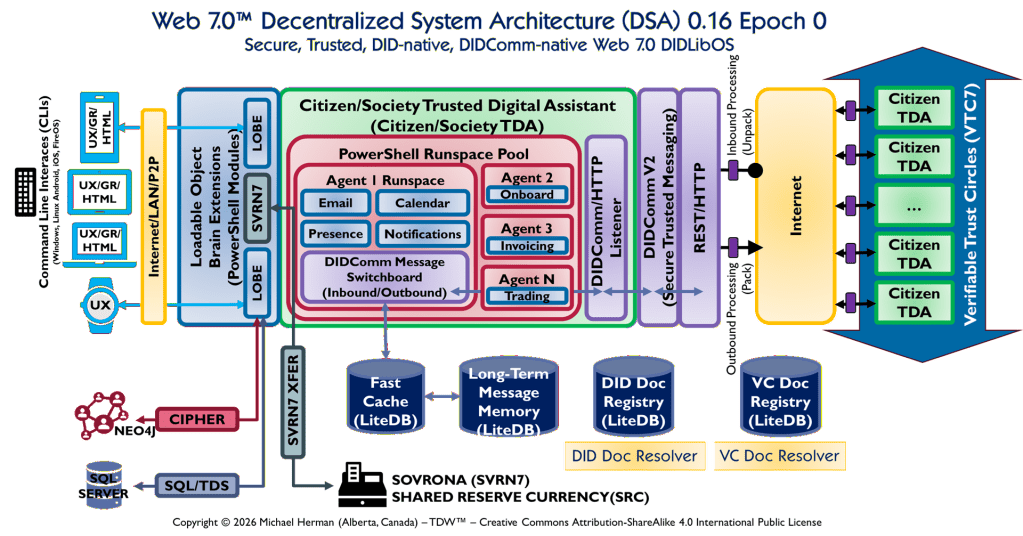

Parchment Programming is an architecture-first software development methodology where a richly annotated visual diagram — the “parchment” — serves as the primary design document and intermediate representation (IR) that an AI coding assistant (like Claude) reads directly to generate correct, idiomatic code.

Rather than translating requirements through layers of prose specifications, the diagram itself encodes stereotypes, interface contracts, project boundaries, data models, and protocol annotations in a form that is simultaneously human-readable and AI-actionable. Parchment Programming was invented by Michael Herman, Chief Digital Officer, Web 7.0 Foundation, in April 2026.

This article presents a structured analysis of, and the key optimization dimensions for, diagrams used in Parchment Programming.

Part 2 can be found here: https://hyperonomy.com/2026/04/09/parchment-programming-diagramic-design-document-intermediate-representation-optimization-part-2/

Parchment Programming Methodology: https://hyperonomy.com/2026/04/09/parchment-programming-methodology/

Claude Prompt

How can this #ParchmentProgramming diagram be optimized as a design document/intermediate representation specification for an AI-coded software system?

What the Diagram Does Well Already

- Layered containment (TDA > Runspace Pool > Agent Runspaces) maps cleanly to class/module hierarchies an AI can scaffold

- Named protocols (DIDComm V2, REST/HTTP, SQL/TDS, CIPHER) give an AI concrete interface contracts to target

- Technology bindings (LiteDB, NEO4J, SQL Server, PowerShell Modules) eliminate ambiguity in dependency selection

- Directional flow (Inbound Unpack → Switchboard → Outbound Pack) implies a pipeline pattern the AI can instantiate

- Multiplicity hints (Agent 1…N, Citizen TDA ×4+) signal collection types and polymorphism requirements

The Six Optimization Gaps

1. Missing Cardinality & Lifecycle Annotations

The diagram shows what exists but not how many or when. An AI needs to know:

- Is the Runspace Pool fixed-size or elastic? Min/max pool size?

- Are LOBEs loaded at startup or on-demand?

- Do Agent Runspaces share state or are they fully isolated?

Fix: Add a small Component Fact Table inset — one row per major component with columns: multiplicity | lifecycle (singleton/transient/pooled) | owns-state? | thread-safe?
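One way to make such a fact table machine-checkable is sketched below in Python. The `ComponentFact` class, the `Lifecycle` enum, the field names, and every example value are illustrative assumptions; none of them are taken from the diagram, which leaves exactly these facts unspecified.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Lifecycle(Enum):
    SINGLETON = "singleton"
    TRANSIENT = "transient"
    POOLED = "pooled"


@dataclass(frozen=True)
class ComponentFact:
    """One row of a hypothetical Component Fact Table."""
    name: str
    min_count: int
    max_count: Optional[int]  # None means unbounded (the "N" in 1...N)
    lifecycle: Lifecycle
    owns_state: bool
    thread_safe: bool

    def validate(self) -> list:
        """Return human-readable problems; an empty list means the row is consistent."""
        problems = []
        if self.min_count < 0:
            problems.append(f"{self.name}: min_count must be >= 0")
        if self.max_count is not None and self.max_count < self.min_count:
            problems.append(f"{self.name}: max_count < min_count")
        if self.lifecycle is Lifecycle.SINGLETON and self.max_count != 1:
            problems.append(f"{self.name}: a singleton must have max_count == 1")
        return problems


# Placeholder rows for illustration only; the real values belong to the diagram author.
FACT_TABLE = [
    ComponentFact("Runspace Pool", 1, 1, Lifecycle.SINGLETON, True, True),
    ComponentFact("Agent Runspace", 1, None, Lifecycle.POOLED, False, False),
]


def validate_table(rows) -> list:
    """Collect the problems from every row of the table."""
    return [problem for row in rows for problem in row.validate()]
```

Running `validate_table(FACT_TABLE)` before handing the spec to an AI assistant would catch contradictory rows (for example, a singleton with an unbounded count) while they are still cheap to fix.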

2. Interface Contracts Are Implied, Not Declared

The AI sees “DIDComm/HTTP Listener” but has no method signatures, message schemas, or error contracts. The connector arrows carry no payload type.

Fix: Add stereotypes on connectors — e.g., label each arrow with «sends: DIDCommEnvelope» or «protocol: REST/JSON-LD». Even a short legend table mapping each arrow color/style to a message type and schema reference would let an AI generate correct interface stubs.
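As a rough illustration of how connector stereotypes could be turned into a connector index, the Python sketch below parses labels of the form `«key: value»` and rejects any arrow that declares no payload type. The function names and the example arrow with its labels are assumptions made for this sketch, not annotations read from the diagram.

```python
def parse_stereotype(label: str) -> tuple:
    """Split a label such as «sends: DIDCommEnvelope» into (key, value)."""
    text = label.strip()
    if not (text.startswith("«") and text.endswith("»")):
        raise ValueError(f"not a stereotype label: {label!r}")
    key, sep, value = text[1:-1].partition(":")
    if not sep or not key.strip() or not value.strip():
        raise ValueError(f"expected «key: value», got {label!r}")
    return key.strip(), value.strip()


def index_connectors(arrows: dict) -> dict:
    """Map each (source, target) arrow to its parsed annotations.

    Raises ValueError for any arrow without a «sends: ...» payload type,
    since that is the gap an AI would otherwise fill by guessing.
    """
    index = {}
    for arrow, labels in arrows.items():
        notes = dict(parse_stereotype(label) for label in labels)
        if "sends" not in notes:
            raise ValueError(f"connector {arrow} declares no payload type")
        index[arrow] = notes
    return index


# Hypothetical arrow and labels, for illustration only.
ARROWS = {
    ("Inbound Unpack", "Switchboard"): [
        "«sends: DIDCommEnvelope»",
        "«protocol: DIDComm V2»",
    ],
}
```

The resulting index gives a code generator one lookup per arrow, which is roughly what a legend table in the diagram would provide to a human reader.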

3. No Error / Exceptional Flow Representation

The diagram shows the happy path only. An AI coding from this will produce brittle code with no fault boundaries.

Fix: Add a Fault Boundary overlay — dashed red borders around components that must implement retry/circuit-breaker, and a small failure-mode legend: CIPHER fail → ?, LiteDB unavailable → ?, DIDComm unpack error → ?
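To show what a fault-boundary annotation might ask of the generated code, here is a minimal circuit-breaker sketch in Python. It is a generic version of the pattern, not the system's design: the class name, the thresholds, and the injectable `clock` parameter are all assumptions chosen for illustration.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    """Reject calls for `reset_after` seconds once `max_failures` calls fail in a row."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self._clock = clock        # injectable so tests can control time
        self._failures = 0
        self._opened_at = None     # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; call rejected")
            # Cool-down elapsed: allow one trial call (half-open state).
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            raise
        self._failures = 0
        return result
```

A component drawn inside a dashed red border would route its outbound calls (to a store, for instance) through `breaker.call(...)`. What should happen when the circuit is open is still one of the `?` entries in the failure-mode legend and has to be decided by the diagram author.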

4. Data Model is Storage-only, Schema-less

Four LiteDB stores are shown (Fast Cache, Long-Term Message Memory, DID Doc Registry, VC Doc Registry) but with no schema, key structure, TTL, or consistency requirements called out. The AI will invent schemas.

Fix: Add a Data Contract sidebar with one mini-schema per store — just the primary key pattern, top 3–5 fields, and TTL/eviction policy. For DID Doc Registry and VC Doc Registry this is especially important since did:drn and VC structure are normative.
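A per-store mini-schema can be small enough to fit in a sidebar and still be executable. The Python sketch below assumes a `StoreContract` shape (key pattern, required fields, TTL) and a made-up contract for the Fast Cache store; the key pattern, field names, and TTL value are placeholders, not the real schema.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class StoreContract:
    """Hypothetical mini-schema for one store: key pattern, fields, TTL."""
    store: str
    key_pattern: str             # regex every primary key must fully match
    fields: tuple                # names of the required top-level fields
    ttl_seconds: Optional[int]   # None means records never expire

    def check(self, key: str, record: dict, age_seconds: float) -> list:
        """Return the contract violations for one record (empty list if none)."""
        problems = []
        if re.fullmatch(self.key_pattern, key) is None:
            problems.append(f"key {key!r} does not match {self.key_pattern!r}")
        for name in self.fields:
            if name not in record:
                problems.append(f"missing field {name!r}")
        if self.ttl_seconds is not None and age_seconds > self.ttl_seconds:
            problems.append("record is past its TTL")
        return problems


# Placeholder contract; every value here is invented for illustration.
FAST_CACHE = StoreContract(
    store="Fast Cache",
    key_pattern=r"msg:[0-9a-f]{8}",
    fields=("id", "received_at", "body"),
    ttl_seconds=300,
)
```

With one such contract per store, an AI assistant has a concrete schema to generate against instead of inventing one, and the same object can double as a runtime or test-time validator.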

5. Security / Trust Boundary is Structural but Not Behavioral

The CIPHER block and VTC7 boundary appear but the enforcement rules are invisible. When does encryption apply? Who authorizes a new LOBE load? What validates a VC before it enters the Runspace?

Fix: Add a Trust Boundary Annotation layer — color-coded zones (already partially present with the green/purple/yellow regions) with an explicit legend: green = TDA-internal trust, purple = DIDComm-authenticated, yellow = internet-untrusted. Add one-line policy statements per zone boundary crossing.
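The zone legend and its per-crossing policy statements could be captured as data, with any undeclared crossing denied by default. In the Python sketch below, the three zones follow the legend described above, while the policy strings and the deny-by-default rule are assumptions added for illustration.

```python
from enum import Enum
from itertools import permutations


class Zone(Enum):
    GREEN = "TDA-internal trust"
    PURPLE = "DIDComm-authenticated"
    YELLOW = "internet-untrusted"


# One-line policy per directed zone crossing. The wording is placeholder text.
CROSSING_POLICY = {
    (Zone.YELLOW, Zone.PURPLE): "placeholder: authenticate the sender before accepting",
    (Zone.PURPLE, Zone.GREEN): "placeholder: validate the message before it enters a runspace",
}


def policy_for(src: Zone, dst: Zone) -> str:
    """Return the declared policy for a crossing; undeclared crossings are denied."""
    if src is dst:
        return "no boundary crossed"
    try:
        return CROSSING_POLICY[(src, dst)]
    except KeyError:
        raise PermissionError(f"no policy declared for {src.name} -> {dst.name}") from None


def missing_policies() -> list:
    """List every directed crossing that has no policy statement yet."""
    return [pair for pair in permutations(Zone, 2) if pair not in CROSSING_POLICY]
```

`missing_policies()` makes the invisible enforcement rules visible as a to-do list: each pair it returns is a boundary crossing the diagram draws (or allows) without saying what must be checked.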

6. No Prompt-Injection Anchors (the Core #ParchmentProgramming Gap)

This is the critical one for AI-coded systems. The diagram has no named generation targets — no indication of which boxes map to which code artifacts, which interfaces must be hand-authored vs. AI-generated, or what the acceptance criteria are per component.

Fix: Add an AI Codegen Manifest — a table in the document (not necessarily in the diagram itself) with columns:

| Component | Target Artifact | Generation Mode | Acceptance Test |
|---|---|---|---|
| PowerShell Runspace Pool | RunspacePoolManager.cs | AI-generated | Pool min/max respected |
| DIDComm Message Switchboard | DIDCommSwitchboard.cs | AI-scaffolded + hand-completed | Routes all registered message types |
| LOBE loader | LobeLoader.cs | AI-generated | Module isolation verified |
| SVRN7 XFER | Svrn7XferService.cs | Hand-authored | SVRN7 SRC ledger integrity |
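Because the manifest is a plain table, a build script can read it directly. The Python sketch below parses a pipe-delimited table like the one above into records and selects targets by generation mode; the function names are assumptions, and the table text is copied from the manifest rows shown here.

```python
MANIFEST = """
| Component | Target Artifact | Generation Mode | Acceptance Test |
|---|---|---|---|
| PowerShell Runspace Pool | RunspacePoolManager.cs | AI-generated | Pool min/max respected |
| DIDComm Message Switchboard | DIDCommSwitchboard.cs | AI-scaffolded + hand-completed | Routes all registered message types |
| LOBE loader | LobeLoader.cs | AI-generated | Module isolation verified |
| SVRN7 XFER | Svrn7XferService.cs | Hand-authored | SVRN7 SRC ledger integrity |
"""


def parse_manifest(markdown: str) -> list:
    """Parse a pipe-delimited table into a list of {column: value} dicts."""
    rows = []
    for line in markdown.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip blank lines and surrounding prose
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if all(cell and set(cell) <= set("-: ") for cell in cells):
            continue  # skip the |---| separator row
        rows.append(cells)
    header, body = rows[0], rows[1:]
    return [dict(zip(header, cells)) for cells in body]


def targets_with_mode(entries: list, mode: str) -> list:
    """Return the target artifacts whose generation mode matches exactly."""
    return [e["Target Artifact"] for e in entries if e["Generation Mode"] == mode]


entries = parse_manifest(MANIFEST)
ai_targets = targets_with_mode(entries, "AI-generated")
```

Here `ai_targets` holds only the artifacts an assistant is allowed to generate outright, so a hand-authored component such as the SVRN7 XFER service is never overwritten by accident.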

Structural Recommendations for the Document Form

If this diagram is the centerpiece of a Parchment Programming spec document, the optimal surrounding structure is:

1. System Identity Block ← version, epoch, DID of this spec doc itself

2. This Architecture Diagram ← with the fixes above applied

3. Component Fact Table ← multiplicity, lifecycle, ownership

4. Connector / Protocol Index ← arrow → schema/interface mapping

5. Data Contract Sidebar ← per-store mini-schemas

6. Trust Boundary Policy ← zone rules in natural language

7. AI Codegen Manifest ← component → artifact → test mapping

8. Open Questions Log ← explicit unknowns the AI must not invent

The Open Questions Log (item 8) is underrated — explicitly flagging what is unspecified prevents an AI from silently hallucinating design decisions into generated code.
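An Open Questions Log can also act as a gate on code generation. The Python sketch below is one possible shape, with the `OpenQuestion` class and helper names assumed for illustration; the two example questions are taken from the lifecycle gap discussed earlier, and their assignment to components is a guess.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpenQuestion:
    """One explicitly unresolved design decision."""
    component: str
    question: str
    resolved: bool = False


def blocked_components(log) -> list:
    """Components that still have at least one unresolved question."""
    return sorted({q.component for q in log if not q.resolved})


def can_generate(component: str, log) -> bool:
    """Allow code generation only when nothing about the component is unresolved."""
    return component not in blocked_components(log)


LOG = [
    OpenQuestion("Runspace Pool", "Is the Runspace Pool fixed-size or elastic?"),
    OpenQuestion("LOBE loader", "Are LOBEs loaded at startup or on-demand?"),
]
```

A generation script that checks `can_generate(...)` first forces each unknown to be answered by a person rather than filled in silently by the model.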

Quick-Win Priority Order

If you’re iterating the diagram itself (not the surrounding document), the highest-leverage single changes are:

- Label every connector arrow with protocol + payload type — eliminates the largest source of AI ambiguity

- Add lifecycle annotations to the LOBE and Agent Runspace boxes

- Explicitly mark the DIDComm V2 trust boundary as the inbound validation gate

The diagram is already well above average as an AI coding input. These additions would move it from architectural sketch to generatable specification.

Has anyone conceived of anything at all similar to Parchment Programming?

There are adjacent ideas in the ecosystem, but nothing that matches Parchment Programming’s specific inversion. Here’s the honest landscape:

Parchment Programming: What Exists — and Why It’s Different

Diagram-as-Code tools (Structurizr/C4, D2, PlantUML, Mermaid) go in the opposite direction — you write text and a diagram is generated, with elements automatically positioned by a layout engine. The diagram is the output, not the primary authoring artifact.

Swark / Claude Code go code → diagram — based on retrieved source files, an LLM builds a prompt to generate an architecture diagram as documentation. Again, the diagram is a byproduct of code, not a driver of it.

Eraser / DiagramGPT go natural language → diagram → code, but the diagram is an ephemeral intermediate — working with an LLM to create a fleshed-out diagram prompt is the workflow, not treating the diagram itself as an authoritative, annotated specification.

Structurizr comes closest in spirit — AI agents and LLMs excel at generating text, and Structurizr’s model-based consistency makes it a good choice for teams looking to generate C4 model diagrams with AI — but it’s DSL-first, not diagram-first, and carries no notion of the diagram encoding interface contracts or project boundary stereotypes for code generation.

Academic reverse-engineering approaches go code → diagram using LLMs, recovering both static and behavioral architectural views by extracting component diagrams and generating state machine diagrams to model component behavior. Still the wrong direction.

What Makes Parchment Programming Genuinely Novel

The field has thoroughly explored code → diagram and text → diagram → code. What nobody has formalized is the specific claim Parchment Programming makes:

The richly annotated visual diagram is the primary authoritative artifact — encoding stereotypes, interface contracts, project boundaries, and protocol modes — such that an AI reads it directly as an intermediate representation to generate correct, idiomatic code without a prose specification intermediary.

The key differentiators are: (1) the diagram is architecture-first and human-authored, not AI-generated; (2) it carries code-generation semantics embedded in the visual (stereotypes, interface names, DI boundaries); and (3) it replaces the prose spec entirely rather than supplementing it. That combination — as a named, intentional methodology — appears to be original to you.
