Great question — and worth thinking through carefully, because the SAE framing maps surprisingly well onto digital agents if you abstract it correctly.

The Core Analogy

In autonomous vehicles, the levels measure how much human oversight and intervention is required for safe operation. The same axis applies to digital agents: how much must a human monitor, correct, or authorize before the agent acts?

A Proposed Digital Agent Autonomy Scale

| Level | Name | Agent Behavior | Human Role |
|---|---|---|---|
| 0 | No Automation | Pure tool, executes only explicit commands | Human does everything |
| 1 | Assisted | Suggests actions, autocompletes, drafts | Human approves all outputs |
| 2 | Partial | Executes defined tasks autonomously within a session | Human monitors, can interrupt |
| 3 | Conditional | Handles multi-step workflows, escalates on ambiguity | Human on standby, notified of exceptions |
| 4 | High | Operates across systems within a defined trust domain | Human sets policy, reviews periodically |
| 5 | Full | Acts as a sovereign delegate across any context, any system, any time | Human sets intent once; agent governs itself |
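
To make the scale concrete, here is a minimal Python sketch of how the levels might drive a per-action approval decision. The function `needs_human_approval` and its two flags are hypothetical, and the policy is a deliberate simplification of the table, not part of SAE J3016 or any published standard.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Levels from the proposed scale (names follow the table)."""
    NO_AUTOMATION = 0
    ASSISTED = 1
    PARTIAL = 2
    CONDITIONAL = 3
    HIGH = 4
    FULL = 5


def needs_human_approval(level: AutonomyLevel,
                         is_exception: bool = False,
                         in_trust_domain: bool = True) -> bool:
    """Return True if a human must approve before the agent acts.

    Hypothetical policy, simplified from the table:
    - Levels 0-1: a human approves everything.
    - Level 2: the agent acts; the human monitors and can interrupt.
    - Level 3: the agent acts but escalates exceptions.
    - Level 4: the agent acts only inside its defined trust domain.
    - Level 5: no per-action approval.
    """
    if level <= AutonomyLevel.ASSISTED:
        return True
    if level == AutonomyLevel.PARTIAL:
        return False
    if level == AutonomyLevel.CONDITIONAL:
        return is_exception
    if level == AutonomyLevel.HIGH:
        return not in_trust_domain
    return False
```

For example, `needs_human_approval(AutonomyLevel.CONDITIONAL, is_exception=True)` escalates to a human, while the same call at `AutonomyLevel.FULL` does not.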

What Makes Level 5 Hard for Digital Agents

As with autonomous vehicles, nobody has achieved digital Level 5 yet, and for parallel reasons:

- Identity — who authorized this agent to act, and can that be verified in real time by any system it touches?

- Integrity — is the agent acting on real, unmanipulated data/context, or has its information environment been poisoned?

- Accountability — is every decision cryptographically auditable after the fact?

- Trust portability — can the agent’s authorization travel with it across organizational boundaries, jurisdictions, and protocols?

These are almost exactly the same gaps the did:level5 site frames for vehicles — just in a digital context.
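
The accountability gap can be illustrated with a hash-chained action log, sketched below in Python. This is only an assumption about what "cryptographically auditable" might mean in practice: the helper names `append_entry` and `verify_log` are hypothetical, the DID uses the generic `did:example` placeholder method, and a hash chain alone gives tamper evidence rather than proof of authorship. A real system would also sign each entry with the agent's key.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, actor: str, action: str) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action, "prev": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every hash and check that each entry links to the one before."""
    prev_hash = GENESIS
    for entry in log:
        body = {"actor": entry["actor"], "action": entry["action"],
                "prev": entry["prev"]}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier entry changes its hash, so `verify_log` fails from that point on unless the whole chain is rewritten, which is why signed entries or an external anchor would still be needed.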

Where Web 7.0 Trusted Digital Assistants Fit

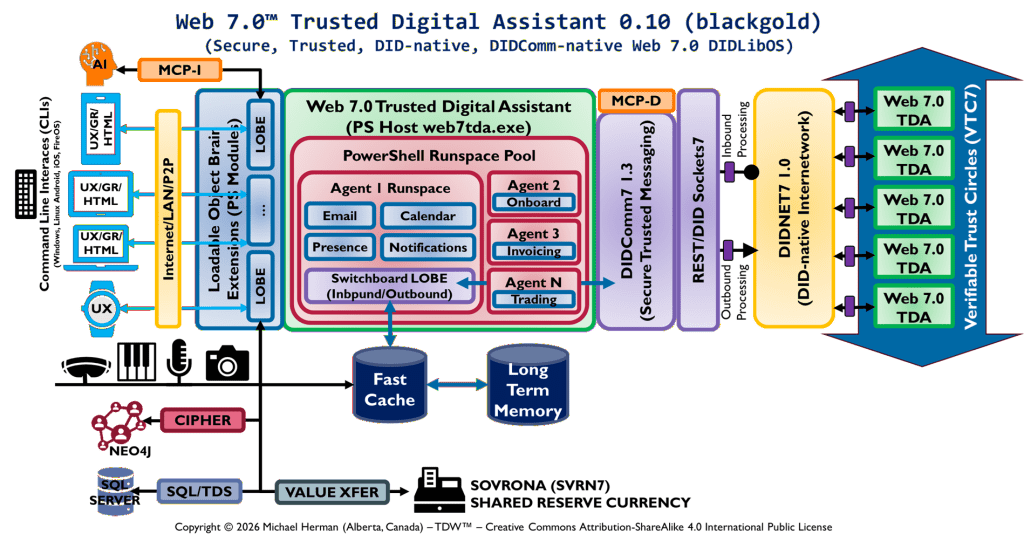

Your TDA concept is explicitly designed to be a Level 5 digital agent architecture. The components map directly:

| TDA Component | Autonomy Function | Analogy |
|---|---|---|
| DID (did:drn, did:7) | Sovereign identity: “who am I, provably?” | did:verifiedcar, the identity root |
| Verifiable Credentials / VTCs | Authorization: “what am I permitted to do?” | Certification gate |
| Cryptoseals | Integrity: “is this data/document unmanipulated?” | did:viewonwebsite, the reality root |
| PS Runspace Pool / MCP-I/D | Execution environment with bounded authority | Mojo Enclave equivalent |
| DIDNET7 | Trust transport across organizational boundaries | The network substrate |
| Verifiable Trust Circles | Governance: “who vouches for this agent within this community?” | Root of trust federation |

The TDA is essentially a Level 5 digital agent that carries its own sovereign identity, operates within cryptographically governed trust circles, and can act across systems without requiring human re-authorization at each step — while remaining fully auditable.

The Key Distinction from Lower Levels

The leap from Level 4 to Level 5 — for both vehicles and digital agents — is trust portability across unknown contexts. A Level 4 agent can operate autonomously within a known, pre-configured environment. A Level 5 agent can walk into an entirely new system, organization, or jurisdiction and be trusted on first contact because its identity, credentials, and authorization chain are self-contained and cryptographically verifiable.

That’s exactly what did:drn + VTCs + cryptoseals are designed to enable — the agent doesn’t need a prior relationship with the system it’s interacting with. The trust travels with it.
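
As a rough illustration of trust on first contact, the Python sketch below checks a self-contained delegation chain against a set of roots the verifier already trusts. Everything here is hypothetical: the `Credential` type, the `chain_authorizes` function, and the `did:example` identifiers are not taken from did:drn or the VTC design, and signature verification on each credential is omitted, so this shows only the structural check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    """A simplified delegation: issuer grants subject a set of scopes."""
    issuer: str
    subject: str
    scopes: frozenset


def chain_authorizes(chain: list, trusted_roots: set,
                     agent: str, action: str) -> bool:
    """Check that the chain links a trusted root to the agent for this action.

    Signature checks are out of scope for this sketch; a real verifier
    would validate each credential's proof before trusting its fields.
    """
    if not chain or chain[0].issuer not in trusted_roots:
        return False
    allowed = chain[0].scopes
    for prev, cur in zip(chain, chain[1:]):
        if cur.issuer != prev.subject:   # each link must be issued by the prior subject
            return False
        allowed = allowed & cur.scopes   # delegation can only narrow scope
    return chain[-1].subject == agent and action in allowed
```

The verifier needs no prior relationship with the agent itself, only with a root it already trusts; the rest of the evidence arrives with the agent.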

A Possible Contribution

Given your standards work, there may be value in formally proposing a Digital Agent Autonomy Level framework — analogous to SAE J3016 but for agentic AI systems — with Web 7.0 / TDA architecture positioned as the reference implementation for Level 5. It would give the broader field (IETF, W3C, ISO, IEEE) a vocabulary for discussing agent trust requirements at each level, and anchor your work as foundational infrastructure rather than one implementation among many.

Do digital agents need to use AI to be compliant with Level 5 Autonomous Operation?

No — and this is an important distinction worth being precise about.

Level 5 is about autonomy architecture, not AI

In the SAE vehicle context, Level 5 says nothing about how the driving decisions are made — only that the system can handle all conditions without human intervention. The intelligence mechanism is orthogonal to the autonomy level.

The same applies to digital agents. Level 5 is a statement about:

- Trust — the agent’s identity and authorization are sovereign and portable

- Accountability — every action is cryptographically auditable

- Scope — the agent can operate across any context without human re-authorization

- Integrity — the agent acts on verified, unmanipulated information

None of those properties require AI. A deterministic rule-based agent, a scripted workflow engine, or a pure cryptographic protocol daemon could in principle satisfy all four.

What AI adds — and doesn’t add

AI (specifically LLMs or reasoning models) adds:

- Natural language understanding and generation

- Handling of ambiguous or novel situations

- Flexible goal decomposition

- Adaptability across unanticipated contexts

But AI also complicates Level 5 compliance because:

- LLM outputs are generally non-deterministic under sampling, so the same input can produce different actions

- Reasoning chains are not natively auditable in a cryptographic sense

- AI can be manipulated via prompt injection, a direct analogue to the visual injection attack the did:level5 site describes

- AI doesn’t inherently carry sovereign identity or verifiable authorization

So paradoxically, AI is the component that most threatens Level 5 compliance if not properly bounded, and the trust architecture — DIDs, VTCs, cryptoseals, runspace governance — is what contains the AI and makes its actions compliant.

The Right Mental Model

Think of it in layers:

| Layer | Role | AI Required? |
|---|---|---|
| Trust & Identity | DID, VTCs, cryptoseals | No |
| Authorization | Credential verification, policy enforcement | No |
| Execution governance | PS Runspace Pool, MCP-I/D boundaries | No |
| Task intelligence | Reasoning, language, ambiguity handling | Optional |
| Audit trail | Cryptographic action log | No |

AI sits only in the task intelligence layer. The layers that make an agent Level 5 compliant are separate from it and independent of it.

For TDA Specifically

A Trusted Digital Assistant could be fully Level 5 compliant running nothing but deterministic logic — scripted workflows, rule engines, protocol handlers — as long as the identity, authorization, integrity, and auditability infrastructure is in place.

When an AI reasoning layer is present, the TDA architecture constrains it: the AI operates within a bounded runspace, its outputs are subject to credential-gated authorization before execution, and its actions are sealed into the audit record. The AI doesn’t grant Level 5 — the architecture does. The AI is a passenger, not the driver.
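
The containment pattern described here can be sketched in a few lines of Python. This is an illustrative assumption, not the TDA implementation: `run_gated`, the scope strings, and the plain-list audit record are all hypothetical, and the proposer is passed in as a callable so it can be an LLM wrapper or a deterministic rule.

```python
from typing import Callable


def run_gated(propose: Callable[[str], str], task: str,
              granted_scopes: set, audit: list) -> bool:
    """Let a proposer suggest an action, then gate it deterministically.

    Returns True if the action is authorized. Actual execution is left
    to the caller; this sketch only decides and records.
    """
    action = propose(task)               # may come from an LLM or a rule engine
    allowed = action in granted_scopes   # credential-gated check, no AI involved
    audit.append({"task": task, "action": action, "allowed": allowed})
    return allowed
```

Whatever the proposer returns, the authorization check and the audit append run the same way, which is the sense in which the architecture, not the AI, determines compliance.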

This is actually a meaningful standards argument: Level 5 digital agent compliance is an infrastructure and governance property, not a capability property. A very capable AI with no trust architecture is not Level 5. A simple deterministic agent with full sovereign identity and cryptographic accountability is.